Secure AI

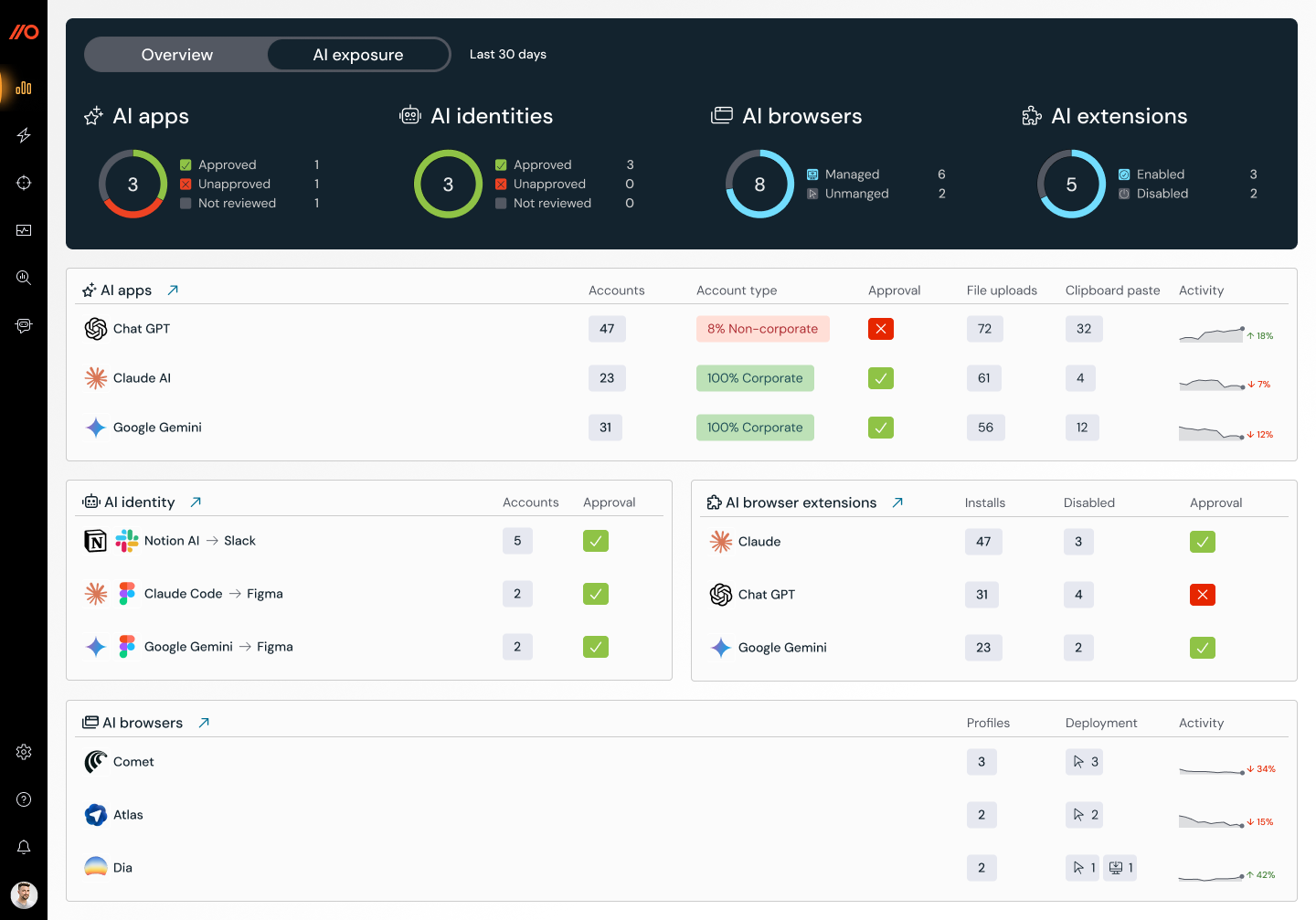

Every AI interaction traverses the browser. Employees use GenAI tools, connect AI apps to corporate accounts, and run agentic workflows, often outside security oversight. Push gives security teams visibility into what AI is doing across their environment and the controls to intervene before sensitive data leaves the organization or access is abused.

- Discover every AI tool and agent active across your workforce

- Detect sensitive data being submitted to AI apps

- Enforce AI policy directly in the browser