What does agentic threat hunting against modern browser-based attacks actually look like? At Push, we built an end-to-end threat hunting and detection engineering capability that uses AI agents as a force multiplier, tripling the number of new detections we’re shipping each month. Here’s how it works.

In March, our threat hunting engine flagged something it hadn’t seen before.

Our research team had already been tracking the growing use of malvertising tied to phishing campaigns. Malvertising frequently targets users via Google Search results, inserting malicious ads or redirects in place of legitimate ads, and using the familiar context of the search results page to trick users into clicking.

To defend Push customers against this threat, we needed a way to spot malicious activity arising from clicking on Google ads. But how to separate signal from noise?

Our hunt combined the skills of human researchers and AI agents to find 12 meaningful results from trillions of browser events visible to the Push extension across our install base.

Of those, one was novel.

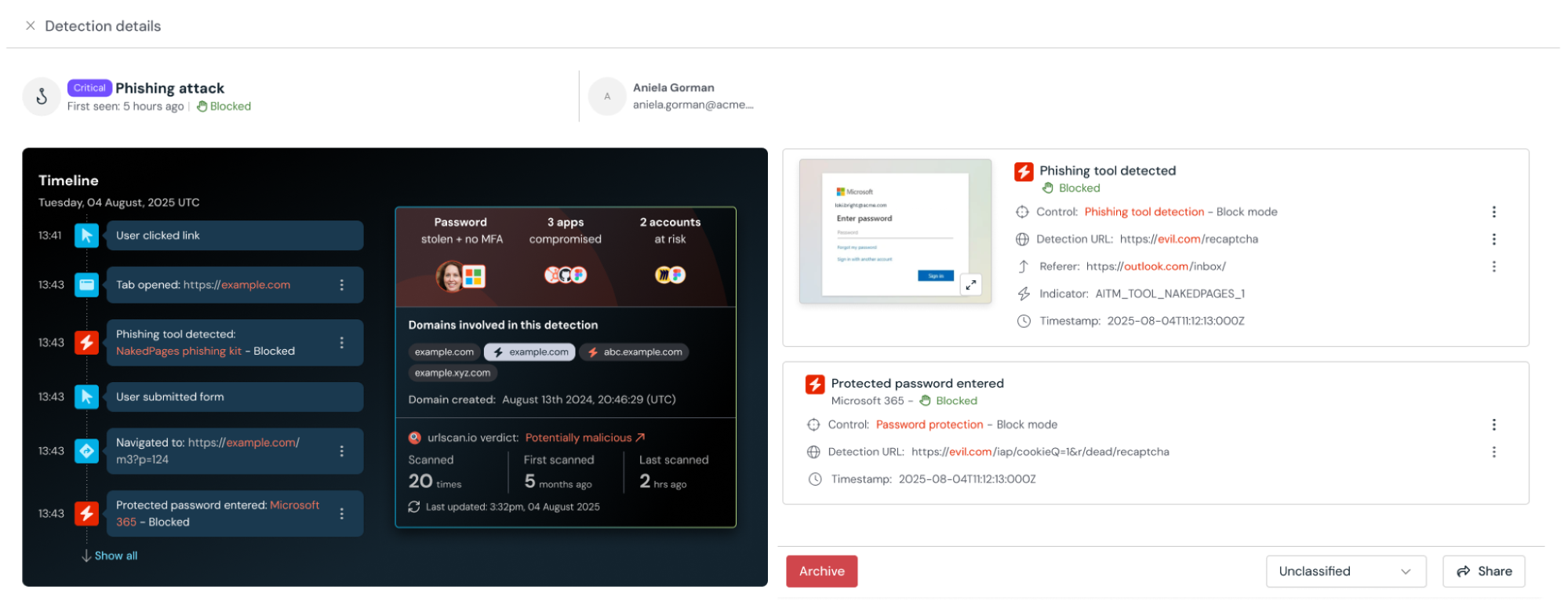

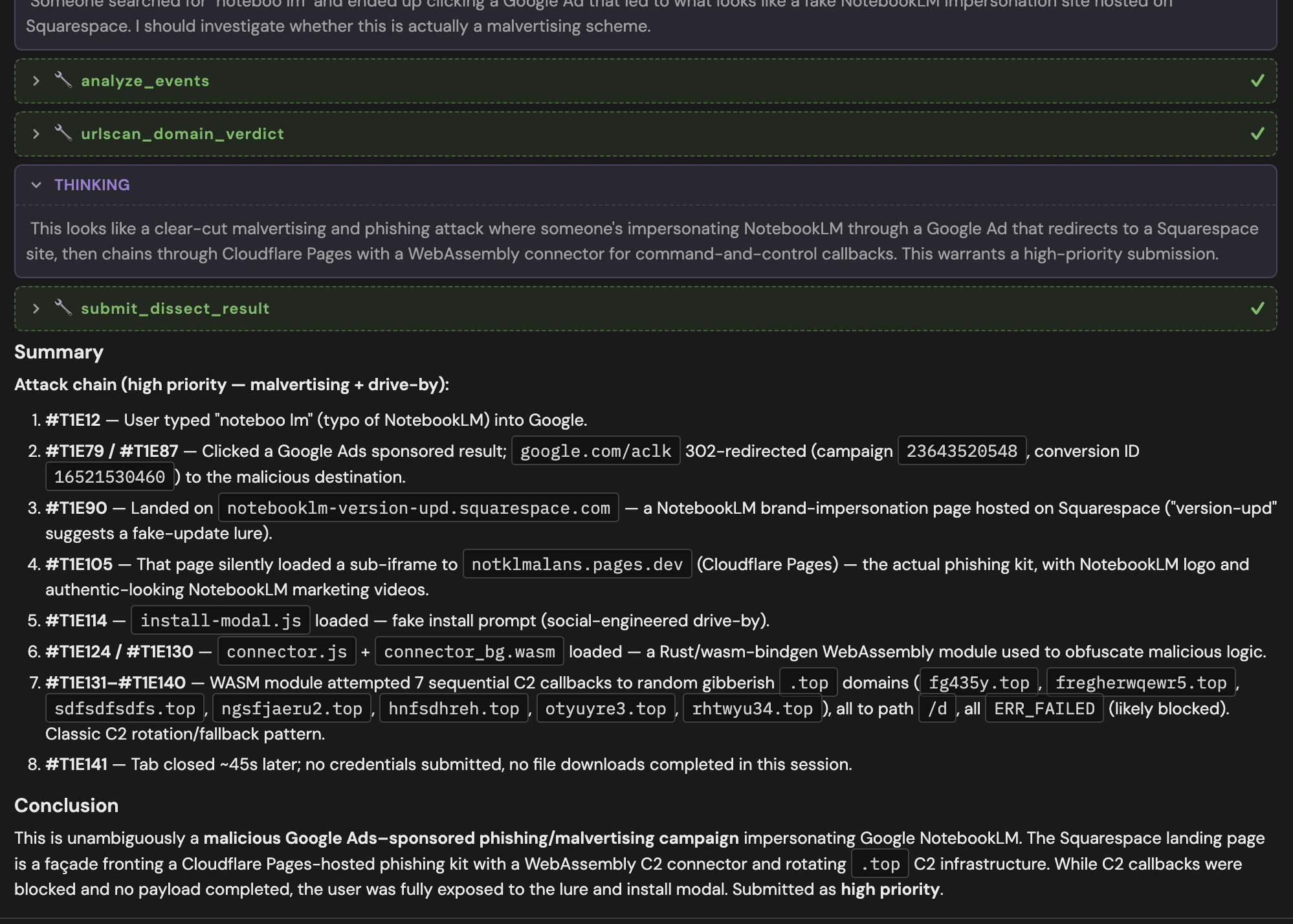

A user had searched for NotebookLM, clicked a paid Google ad, and gotten redirected to a page impersonating NotebookLM. The page itself was just a facade fronting a Cloudflare Pages-hosted phishing kit with a WebAssembly C2 connector. To the user, it looked like a completely on-brand NotebookLM page, and if they had run the fake install prompt, they would have installed malware. (Note: NotebookLM doesn’t even require a local install, but the page was convincing enough — and AI platforms are changing so quickly — that the lure was extremely believable.)

We had found our first in-the-wild InstallFix attack.

Within minutes, our analysis agents had drafted detection logic, and researchers shipped a new detection to every Push customer.

Eighteen months ago, it would have taken a human analyst days or even weeks to unpack the attack, comb through web requests, de-obfuscate web code, trace JavaScript execution, and extract tactics, techniques, and procedures (TTPs) that go beyond short-lived, single-use IOCs like domain names, then code up that work as a detection and deploy it to customers.

That was viable when new tools or techniques showed up once or twice a quarter. It doesn’t stand a chance when attack evolutions occur weekly or even daily. That’s the reality now with AI-generated adversary tools.

So, can AI agents replace human threat researchers? That's the wrong question. Can AI agents massively scale the expertise of a seasoned human threat hunter, without getting bored of repetitive tasks, missing pertinent but easily overlooked details, or creating operational silos dependent on one person's knowledge, and do that work continuously across trillions of data points? Yes, absolutely.

In this article, we’ll outline how Push uses AI agents as a force multiplier for identifying emerging threats that target organizations via the browser — think: ClickFix, vibecoded phishing sites, AiTM kits, cloned login pages, ConsentFix attacks, malicious OAuth apps, device code phishing, sites impersonating Claude Code installers, etc. — and share what we’ve learned.

We’ll cover the architectural decisions we made that enable the successful implementation of agents and some emerging best practices we’ve identified; discuss why our hunts focus on extracting techniques, not indicators; and illustrate how Push customers are benefitting from this agentic pipeline.

Why scaling browser threat detection requires more than more analysts

Already this year, we’ve tripled the cumulative number of detections shipped to Push customers using this pipeline. That output points to the first problem we set out to solve by employing AI agents: Scaling our research team’s considerable expertise.

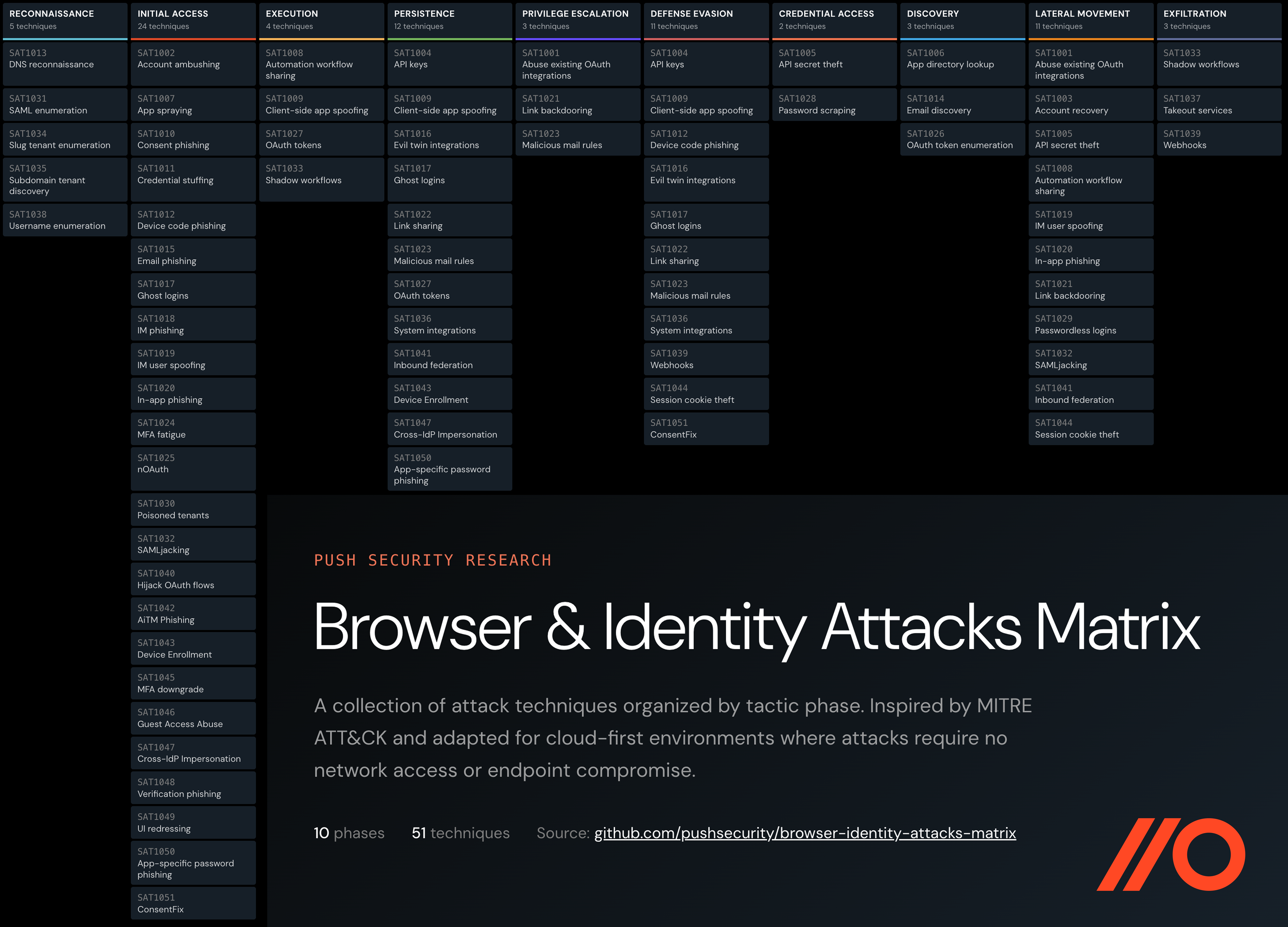

Push’s R&D team are experts at understanding and unpacking modern browser-based attacks. This is essential when you consider how quickly attacks themselves are evolving. When we created the Browser & Identity Attacks Matrix in 2023 (then called the SaaS Attacks Matrix), many of the ideas in it were theoretical. Not anymore.

We’ve tracked the rise of AiTM phish kits from their status as MFA-bypassing novelties to the emergence of an entire criminal ecosystem built around increasingly sophisticated Phishing-as-a-Service tools.

We imagined the simple but effective power of using device code authorization for phishing three years ago; in the last few months, we’ve detected a 37x increase in device code phishing attacks across our install base.

We were also the first to detect a novel post-authorization attack we dubbed ConsentFix that combines OAuth consent phishing and ClickFix-style user prompts; reported on the rise of the ridiculously simple yet effective InstallFix technique described earlier; and detected an array of other creative phishing campaigns tied to malvertising scams.

With an agentic approach, we could scale this expertise and reduce the time it takes to go from technique discovery to production-ready detection. This speed is critical now because adversaries are also using AI tools to do their work, exploding the number of trivial-to-rotate indicators of compromise and overwhelming existing detection workflows that can't operate at machine speed.

Push researchers are seeing extensive evidence of LLM use in attacks we detect, from LLM-generated phishing kits and tools to vibe-coded cloned pages, demonstrating how much adversaries have embraced these tools to expedite their work.

In particular, we've observed operator-gated payload delivery: malicious content is served only to active targets, using gated landing pages, anti-bot checks, and other methods to evade proactive infrastructure scanning. Because the payload never appears to scanners, these sites are much less likely to be flagged and added to known-bad lists. This reinforces the need for browser-based detection at the point where the user interacts with the page.

Scaling behavioral detections, not just making bigger blocklists

But output numbers alone don’t tell the story of successful detections. That’s the other problem we set out to solve at scale: Most secure browser solutions rely on detection logic based on blocking known-bad indicators like domains, IPs, and URLs.

If your solution offers 1,000 detections, and they’re all based on known-bad indicators that are easily rotated, then you’ve got 1,000 detections that worked once and will likely never fire again. They certainly won’t catch subtle adaptations in adversary techniques that don’t rely on infrastructure changes, which are easy for attackers to swap anyway.

Push does it differently. Our detection engine is focused on hunting for tactics, techniques, and procedures: the behavioral fingerprints of an attack, not just the infrastructure it runs on.

Instead of blocking based on known-bad domains, URLs, and IPs, our detections are built around user-level and page-level behaviors like what scripts load, how redirects behave, what events fire, what actions a user takes and what happens next, etc. (In fact, Push detections don’t even use any infrastructure-based IOCs, though customers can write their own custom detections if they have a specific IOC they’re keeping an eye on.)

All the detections we write are designed to survive infrastructure rotation by adversaries, and many of our existing detections have caught never-before-seen evolutions in TTPs. That's because we focus on the top of the Pyramid of Pain: the indicators that are hardest for attackers to change.
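To make the contrast concrete, here is a minimal sketch comparing an indicator-based rule with a behavioral one. The event model, field names, and the `looks_like_command` flag are hypothetical illustrations, not Push's schema or detection logic; the point is only that a domain blocklist stops matching once the attacker rotates domains, while a rule keyed on behavior keeps firing.

```python
from dataclasses import dataclass, field

# Hypothetical event model: a browsing trace is an ordered list of events seen
# in one tab. Field names are illustrative, not Push's actual schema.
@dataclass
class Event:
    kind: str                # e.g. "navigate", "user_click", "clipboard_write"
    domain: str
    meta: dict = field(default_factory=dict)

# Hypothetical IOC list: one domain observed in a past campaign.
KNOWN_BAD_DOMAINS = {"lure-one.example"}


def ioc_rule(trace: list[Event]) -> bool:
    """Fires only if the trace touches a domain already on the blocklist."""
    return any(e.domain in KNOWN_BAD_DOMAINS for e in trace)


def behavioral_rule(trace: list[Event]) -> bool:
    """Fires on a behavior sequence, whatever the domain: a user click
    followed by the page writing command-like text to the clipboard."""
    clicked = False
    for e in trace:
        if e.kind == "user_click":
            clicked = True
        elif e.kind == "clipboard_write" and clicked and e.meta.get("looks_like_command"):
            return True
    return False


original = [
    Event("navigate", "lure-one.example"),
    Event("user_click", "lure-one.example"),
    Event("clipboard_write", "lure-one.example", {"looks_like_command": True}),
]
# Same behavior after the attacker rotates to a fresh domain.
rotated = [Event(e.kind, "lure-two.example", e.meta) for e in original]

print(ioc_rule(original), ioc_rule(rotated))                # True False
print(behavioral_rule(original), behavioral_rule(rotated))  # True True
```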

This focus on detecting TTPs has always been our approach. But with the acceleration in both attack types and the ease with which adversaries rotate infrastructure, we needed to build capabilities that scaled our knowledge.

We did this not by replacing researchers, but by continuously activating their expertise.

Core principles for agentic threat hunting

Three principles make Push's agentic threat hunting and detection engineering pipeline work:

Context matters more than custom models

We’re not AI researchers; we’re security researchers — we aren't trying to compete in building the most intelligent models. And in our view, AI models are quickly becoming commoditized like cloud infrastructure, anyway. Luckily, the commercial models today already excel at understanding web code. We just need to harness their power with our expertise.

So at Push, we use a variety of commercial AI models and tools in complementary ways. What matters most is the telemetry they analyze, and that’s where Push’s existing product infrastructure shines: We’re already deployed into over 3 million browsers worldwide, and the Push browser extension includes a component that operates as a flight recorder to locally record everything that matters inside a browser session.

This universe of metadata — DOM elements, tab context, script execution, network traffic, user actions, credential entry, etc. — becomes the searchable corpus for hunts. Metadata is stored locally in users’ browsers and only queried during targeted threat hunts.

This approach avoids dragnet collection of sensitive data. Instead, we focus on collecting metadata and distilling it into patterns and insights that give agents context for their analysis. Push also does not train or fine-tune models on customer data.
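The local-storage, query-on-demand model described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `LocalFlightRecorder` class, its table layout, and the in-memory SQLite store are hypothetical stand-ins, not how the Push extension actually persists data. It records facts about interactions (for example, that a password field was submitted) rather than raw values, and only reads them when a hunt query runs.

```python
import sqlite3
import time


class LocalFlightRecorder:
    """Hypothetical sketch of a local, metadata-only event store.

    Events stay in a local database (in-memory SQLite here) and are only read
    when a hunt query is run against them. Callers record facts about an
    interaction, such as "a password field was submitted", never raw values.
    """

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE events (ts REAL, tab_id INTEGER, kind TEXT, domain TEXT, meta TEXT)"
        )

    def record(self, tab_id: int, kind: str, domain: str, meta: str = "") -> None:
        self.db.execute(
            "INSERT INTO events VALUES (?, ?, ?, ?, ?)",
            (time.time(), tab_id, kind, domain, meta),
        )

    def hunt(self, sql: str, params: tuple = ()) -> list[tuple]:
        """Run a hunt query; statements that don't start with SELECT are refused."""
        if not sql.lstrip().upper().startswith("SELECT"):
            raise ValueError("hunt queries must be read-only SELECT statements")
        return self.db.execute(sql, params).fetchall()


recorder = LocalFlightRecorder()
recorder.record(1, "navigate", "login-portal.pages.dev")
recorder.record(1, "credential_entry", "login-portal.pages.dev", "field_type=password")
print(recorder.hunt("SELECT domain, meta FROM events WHERE kind = ?", ("credential_entry",)))
# [('login-portal.pages.dev', 'field_type=password')]
```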

Agents are only as good as the context you give them. Good context is researcher-led

AI agents don’t know how to identify the TTPs of browser-based attacks until you give them the right context, and Push researchers have spent years unpacking these techniques and tools. Agents at Push consume our internal knowledge base of identified TTPs, and both humans and agents perform meta-analyses to check their work. The agents have access to large libraries of traces of human interactions with real phishing kits. This is a powerful dataset to build on.

When we don't get the results we want from AI models, we ask: What context are they missing? What does our human team know that the agents don't, and how can we give them that context? Do they need data, tools, or better workflows? Answering those questions closes the performance gap and keeps quality high.
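One way to picture "giving agents context" is selecting the knowledge base entries relevant to a hunt and rendering them into a bounded block of text for the agent. The sketch below assumes a hypothetical `TTPEntry` record with tags and observable behaviors, and a simple character budget standing in for a context-window limit; the entries are short paraphrases of techniques named in this article, not Push's internal knowledge base.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TTPEntry:
    """Hypothetical knowledge base record for one attack technique."""
    name: str
    tags: frozenset[str]
    observables: tuple[str, ...]


KNOWLEDGE_BASE = [
    TTPEntry("ClickFix", frozenset({"clipboard", "user-prompt"}),
             ("page writes a command to the clipboard",
              "page tells the user to paste it into a terminal or Run dialog")),
    TTPEntry("Device code phishing", frozenset({"oauth", "device-code"}),
             ("page displays a device code",
              "user is told to enter it on a legitimate sign-in page")),
    TTPEntry("AiTM phishing", frozenset({"credentials", "proxy"}),
             ("login page is proxied from the real identity provider",
              "session token is captured after MFA completes")),
]


def build_context(hunt_tags: set[str], budget_chars: int = 2000) -> str:
    """Render the entries relevant to a hunt as agent context, stopping
    before the (approximate) character budget would be exceeded."""
    parts, used = [], 0
    for entry in KNOWLEDGE_BASE:
        if not entry.tags & hunt_tags:
            continue  # not relevant to this hunt
        block = f"## {entry.name}\n" + "\n".join(f"- {o}" for o in entry.observables)
        if used + len(block) > budget_chars:
            break
        parts.append(block)
        used += len(block)
    return "\n\n".join(parts)


print(build_context({"clipboard", "oauth"}))
```

When an agent underperforms, the questions above map onto this shape: is a relevant entry missing, are its observables too vague, or is the budget cutting off something the agent needs?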

Integrated architecture that makes agentic AI the throughput layer, not a bolt-on

The constraint we’re trying to break by using AI isn’t knowledge, it’s throughput. Our researchers deeply understand the techniques and tools. An agentic pipeline can apply that understanding continuously across millions of browsers and trillions of events, ingest new external signals, generate hunt hypotheses, triage results, and return only the findings that warrant escalation.

This approach relies on tight integration of our product and our agentic workflows. We’ll take a closer look at that in the next section.

How the agentic detection pipeline runs

Now let's look at how agentic threat detection actually works, along with some emerging best practices we've identified. We'll cover two example hunts: one initiated autonomously by the agents themselves, and one initiated by our research team.

Example 1: Autonomous threat hunt

Push’s threat hunting pipeline ingested context from research articles describing a new attack technique, and an agent developed hypotheses on what to hunt for across Push’s install base to identify instances of this attack.

The agent crafted detection queries and then refined them to reduce false positives. The final query ran across stored metadata and returned results that were validated as containing zero false positives.
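The refinement step can be illustrated with a small greedy loop. This is a minimal sketch, assuming each trace has been reduced to hypothetical boolean features and that a sample of traces has been labeled malicious or benign during review; it is not the agent's actual procedure. Candidate conditions are added to the query only if they cut false positives without dropping any true positives.

```python
from typing import Callable

# Hypothetical: each trace is summarized as a dict of boolean features, and
# reviewers have labeled a sample of traces as malicious (True) or not.
Trace = dict[str, bool]
Condition = Callable[[Trace], bool]


def evaluate(conds: list[Condition], labeled: list[tuple[Trace, bool]]) -> tuple[int, int]:
    """Count true and false positives for a query made of AND-ed conditions."""
    tp = fp = 0
    for trace, malicious in labeled:
        if all(c(trace) for c in conds):
            if malicious:
                tp += 1
            else:
                fp += 1
    return tp, fp


def refine(base, candidates, labeled):
    """Greedily add candidate conditions that remove false positives without
    losing any true positives; stop once false positives reach zero."""
    conds = list(base)
    tp, fp = evaluate(conds, labeled)
    for cand in candidates:
        if fp == 0:
            break
        new_tp, new_fp = evaluate(conds + [cand], labeled)
        if new_tp == tp and new_fp < fp:
            conds.append(cand)
            fp = new_fp
    return conds, (tp, fp)


labeled = [
    ({"clipboard_write": True, "shared_host": True, "from_ad": True}, True),
    ({"clipboard_write": True, "shared_host": False, "from_ad": True}, False),
    ({"clipboard_write": True, "shared_host": True, "from_ad": False}, False),
]
base = [lambda t: t["clipboard_write"]]
candidates = [lambda t: t["shared_host"], lambda t: t["from_ad"]]
conds, (tp, fp) = refine(base, candidates, labeled)
print(len(conds), tp, fp)  # 3 1 0
```

A greedy search like this is only as good as the labeled sample, which is one reason human review of the validation set still matters.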

The validated query became a scheduled job that runs on a regular cadence to monitor for potentially malicious signals. A triage agent then received any matches, performed an initial analysis, and passed anything that looked suspicious to another agent for deeper analysis. This deep analysis agent wields the full investigative toolkit a human researcher would: Push's internal knowledge base, domain age and registration analysis, URLScan and WHOIS lookups, DOM image analysis, contextual analysis of page-level and user-level behaviors, and more.

Within a few minutes, it can filter a thousand or more signals in a hunt trace down to a handful of meaningful ones and provide an actionable assessment. Then, once the TTP was well understood, other agents wrote and refined detections that raise alerts for customers when an event of this type is seen. The Push platform immediately applies each customer's configured security controls, such as blocking users from interacting with malicious pages.
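The scheduled query, triage, and deep analysis stages form a funnel. The sketch below is a simplified illustration under explicit assumptions: the `Match` fields, the 30-day domain-age threshold, and the scoring rule are hypothetical, and the two "agents" are plain functions over pre-computed signals rather than LLM calls with enrichment tools. It shows how a cheap first pass narrows the set before the more expensive analysis runs.

```python
from dataclasses import dataclass


@dataclass
class Match:
    """Hypothetical summary of one scheduled-query hit."""
    trace_id: str
    domain_age_days: int
    on_shared_host: bool
    prompts_user_action: bool   # e.g. download, clipboard copy, OAuth consent


def triage(m: Match) -> bool:
    """Cheap first pass standing in for the triage agent: keep any hit
    with at least one infrastructure risk signal."""
    return m.on_shared_host or m.domain_age_days < 30


def deep_analysis(m: Match) -> dict:
    """Stand-in for the deep analysis agent. A real one would call enrichment
    tools; here the signals are pre-computed and simply scored."""
    score = sum([m.on_shared_host, m.domain_age_days < 30, m.prompts_user_action])
    return {"trace_id": m.trace_id, "score": score,
            "verdict": "escalate" if score >= 2 else "benign"}


def run_hunt(matches: list[Match]) -> list[dict]:
    triaged = [m for m in matches if triage(m)]
    assessed = [deep_analysis(m) for m in triaged]
    return [a for a in assessed if a["verdict"] == "escalate"]


matches = [Match(f"t{i}", 400, False, i % 2 == 0) for i in range(997)]
matches += [Match("s1", 3, True, True), Match("s2", 10, False, True), Match("s3", 5, False, False)]
findings = run_hunt(matches)
print(len(matches), [f["trace_id"] for f in findings])  # 1000 ['s1', 's2']
```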

Example 2: Human-initiated threat hunt

Now, going back to the example from the beginning of the article: InstallFix. This hunt started with a thorny problem our research team needed to solve: How do you detect malicious activity downstream of a user interacting with a Google ad? We needed a way to separate the bad links from the good ones.

Our researchers collaborated with agents to formulate the right parameters for hunt queries, taking into account that legitimate ads are normally bought by companies with marketing budgets, so they would be expected to redirect to pages hosted on custom domains, not shared hosting domains like *.pages.dev, *.workers.dev, *.squarespace.com, etc.

Our AI agents already understood key TTPs that indicated potential maliciousness on a page: password prompts, file downloads, OAuth integrations, clipboard copies, and similar user prompts that are frequently abused.

The agent ran several queries that returned matching browsing traces — the term we use for sequences of events in a session or tab context — where the user clicked a Google ad, was redirected to a page on a shared hosting domain, and then clicked a button to copy content to their clipboard.
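The trace pattern described above (ad click, then a landing page on shared hosting, then a clipboard write on that page) can be expressed as a small sequence matcher. This is a minimal sketch, not Push's query: the `(event_kind, page_url)` trace format is hypothetical, the shared-hosting list is abbreviated to the three examples above, and recognizing ad clicks by Google's `googleadservices.com` and `google.com/aclk` redirect URLs is an assumption of the sketch.

```python
from urllib.parse import urlparse

# Assumptions for this sketch: the shared-hosting list is abbreviated, and
# ad clicks are recognized by Google's ad-click redirect URLs.
SHARED_HOSTING_SUFFIXES = ("pages.dev", "workers.dev", "squarespace.com")


def host(url: str) -> str:
    return (urlparse(url).hostname or "").lower()


def in_domain(h: str, domain: str) -> bool:
    """True if hostname h is the domain itself or one of its subdomains."""
    return h == domain or h.endswith("." + domain)


def on_shared_host(url: str) -> bool:
    return any(in_domain(host(url), s) for s in SHARED_HOSTING_SUFFIXES)


def is_google_ad_click(url: str) -> bool:
    h = host(url)
    return in_domain(h, "googleadservices.com") or (
        in_domain(h, "google.com") and urlparse(url).path.startswith("/aclk"))


def matches_installfix_pattern(trace: list[tuple[str, str]]) -> bool:
    """trace: ordered (event_kind, page_url) pairs from a single tab.
    Matches: ad click -> landing page on shared hosting -> clipboard write there."""
    landing = None      # hostname of a shared-host page reached from an ad
    after_ad = False
    for kind, url in trace:
        if kind == "navigate":
            if is_google_ad_click(url):
                after_ad = True
            elif after_ad:
                # Treat the first non-ad navigation as the landing page.
                landing = host(url) if on_shared_host(url) else None
                after_ad = False
        elif kind == "clipboard_write" and landing and host(url) == landing:
            return True
    return False


suspicious = [
    ("navigate", "https://www.google.com/search?q=notebooklm"),
    ("navigate", "https://www.googleadservices.com/pagead/aclk?sa=L"),
    ("navigate", "https://lure-example.pages.dev/"),
    ("clipboard_write", "https://lure-example.pages.dev/"),
]
legit_ad = [
    ("navigate", "https://www.googleadservices.com/pagead/aclk?sa=L"),
    ("navigate", "https://docs.vendor.example/install"),
    ("clipboard_write", "https://docs.vendor.example/install"),
]
print(matches_installfix_pattern(suspicious), matches_installfix_pattern(legit_ad))  # True False
```

Real redirect chains often pass through intermediate tracking hops, so a production query would need a more forgiving notion of "landing page" than the first non-ad navigation used here.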

We got back high-fidelity findings and then tuned the query into a continuous detection that leveraged existing detection logic around related techniques. This process also effectively back-tests new detections, so we know we’re not going to generate a lot of false positives. Result: A new detection against a new technique, plus several improvements to existing detections.

What infrastructure is needed for agentic threat hunting?

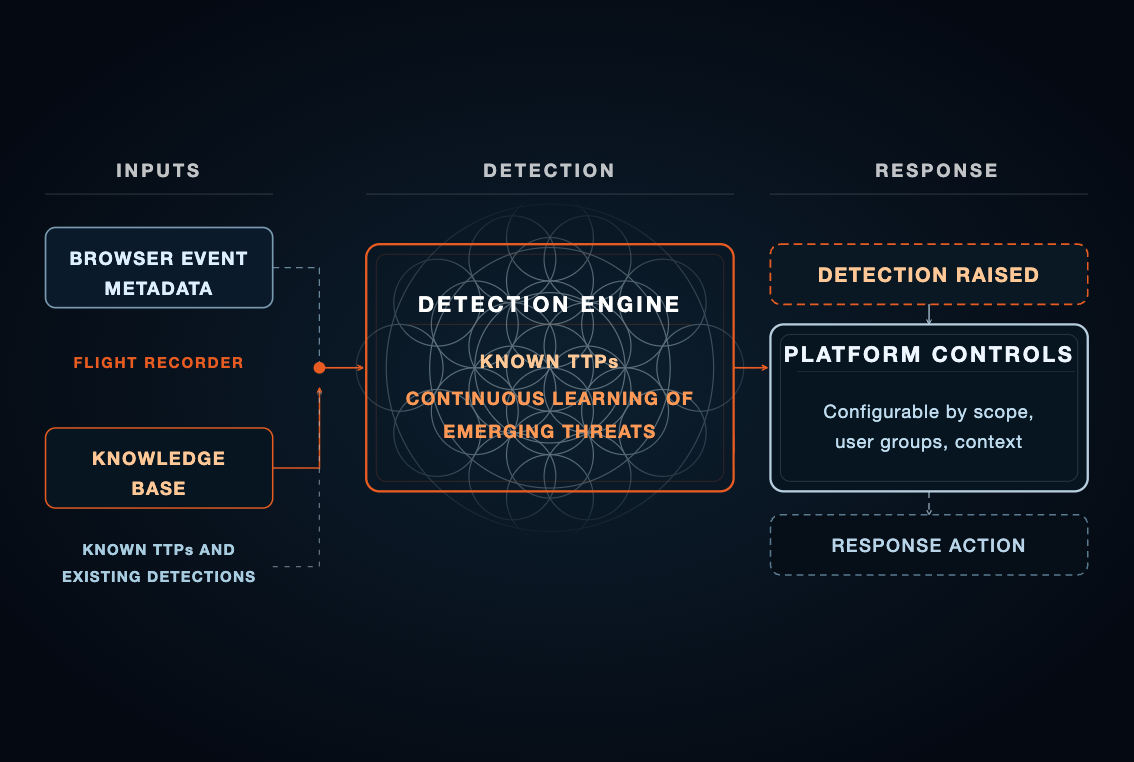

Both of these examples illustrate the end-to-end workflows supported by this pipeline. From an infrastructure perspective, you can think about the pipeline as composed of:

A flight recorder: The Push extension-powered capability that collects and locally stores browser event metadata from users’ browsers.

A knowledge base: Structured knowledge about what Push knows about TTPs and its existing body of detection logic, as well as externally sourced signals of new attack trends.

Agents as tools: Role-segmented agents that work as a team to triage, investigate, develop hunt queries, return analyses, write detections, and review each other's work for completeness and accuracy.

Humans in the loop: Human researchers who collaborate with agents to initiate hunts and tune detections.

Platform controls: The Push administrator-configured controls that specify how to respond to detected events like AiTM phishing, tunable by scope, user groups, browser profiles, apps, etc.

What are the best practices for agentic threat detection?

To be effective, agents must specialize and focus. This is the agents as tools concept. When we’re asking AI agents to take massive amounts of data and make a high-level decision about a signal in observed browser events, they must work as a team, finding intelligent ways to condense information without losing important context or hallucinating.

Creating a hierarchy of agent jobs — including agents to perform meta-analyses to catch mistakes and verify conclusions — makes the agents effective by giving them a manageable focus that controls the size of context windows.
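Two of the ideas above, narrowing each agent's view and having a reviewer check conclusions, can be sketched in a few lines. The `Claim` structure, the `condense` and `review` helpers, and the event-ID scheme are hypothetical, chosen only to illustrate the pattern: a specialized agent sees just the events relevant to its role, and a meta-analysis pass rejects any claim whose cited evidence is not actually in the trace, which is one simple guard against hallucinated conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Hypothetical analyst claim tied to one piece of trace evidence."""
    text: str
    event_id: int        # the trace event the claim relies on
    expected_kind: str   # the kind of event the claim says happened


def condense(trace: dict[int, str], kinds_of_interest: set[str]) -> dict[int, str]:
    """Give a specialized agent only the events relevant to its role,
    keeping its context small."""
    return {eid: kind for eid, kind in trace.items() if kind in kinds_of_interest}


def review(claims: list[Claim], trace: dict[int, str]) -> tuple[list[Claim], list[Claim]]:
    """Meta-analysis pass: accept a claim only if the cited event exists in
    the trace and is of the kind the claim asserts; otherwise reject it."""
    accepted, rejected = [], []
    for claim in claims:
        if trace.get(claim.event_id) == claim.expected_kind:
            accepted.append(claim)
        else:
            rejected.append(claim)
    return accepted, rejected


trace = {1: "navigate", 2: "user_click", 3: "clipboard_write", 4: "download"}
view = condense(trace, {"clipboard_write", "download"})
claims = [
    Claim("page wrote to the clipboard", 3, "clipboard_write"),
    Claim("user entered a password", 5, "credential_entry"),  # no such event
]
ok, flagged = review(claims, trace)
print(view)
print([c.text for c in ok], [c.text for c in flagged])
```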

Use agents as an excuse to operationalize your internal knowledge once and for all. Every security team has a venerable silo of knowledge — that one person who just knows how to do that one major thing. Now, that can be an AI resource accessible to all, at any time, whenever you need it most.

Creating an agentic workflow requires operationalizing your internal knowledge in a repeatable and trustworthy way. Sharing rich context from human discoveries is the key to getting the best results out of agents.

It's also vital that agents use privacy-preserving methods and infrastructure. The Push agent is designed to respect customer and user privacy while enabling high-fidelity detections. We do this by collecting broad browser metadata but storing it locally in users' browsers and only querying that metadata during active threat hunting investigations.

The compounding effect and how it benefits Push customers

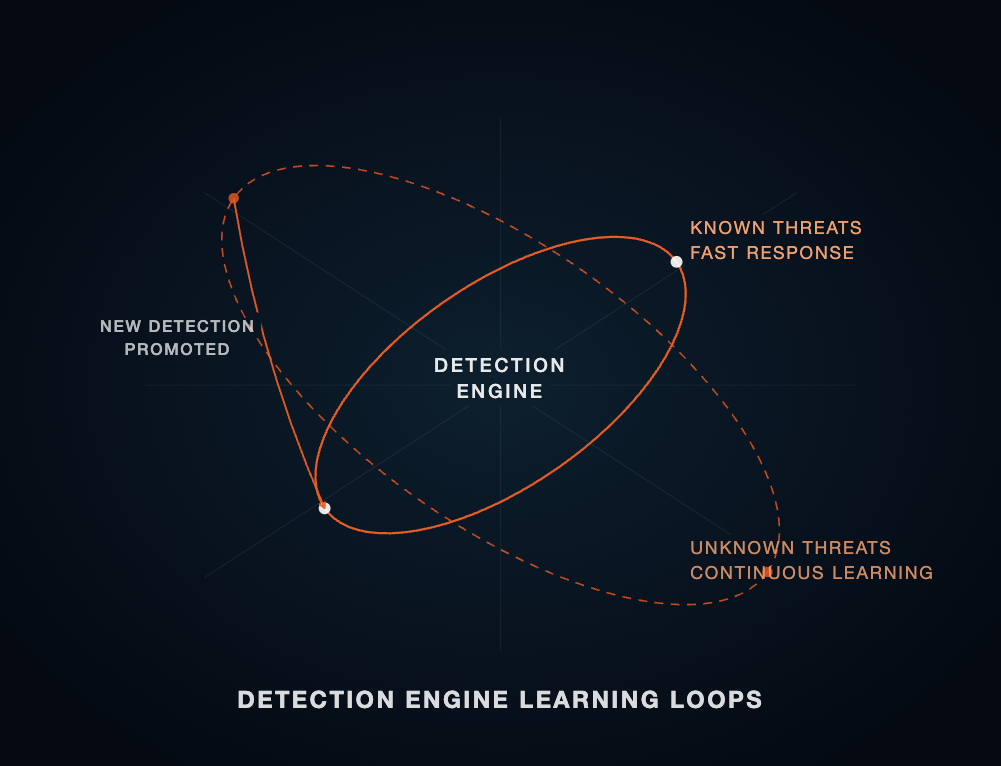

At Push, we think about our detection capability as two learning loops with a compounding effect: An inner loop that serves as our real-time detection and response engine for known attacker techniques, and an outer loop that is the continuous learning our agents do as they hunt for new threats, analyze emerging behaviors, and create new detections.

The outer loop feeds the inner loop, and vice versa.

Customers benefit from this approach because it means they:

Regularly receive ready-made detections against both known and emerging browser-based threats, without having to write their own detections. (Push also provides the ability to write your own custom detections for environment-specific use cases.)

Can configure Push’s response actions based on their security goals and environment. Agents act as the threat-hunting and detection engineering team; Push customers set the thresholds for how they want to respond. For example, customers can use Push controls to block all AiTM phishing attacks (or even carve out exceptions for their own incident responders to be able to visit malicious pages with just a warning), and agents continually feed new indicators into detection logic for that class of attack.

Get pre-digested and actionable intelligence from every detection, with extremely high fidelity.
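The division of labor described above (agents supply detections, customers decide the response) can be modeled as a small policy lookup. This is a minimal sketch with hypothetical names: `ResponsePolicy`, the category strings, the group names, the "monitor" fallback, and the rule that the most restrictive matching override wins are all assumptions for illustration, not Push's configuration model. It reproduces the example of blocking AiTM phishing for everyone while letting incident responders proceed with a warning.

```python
from dataclasses import dataclass, field

# Hypothetical ordering used to resolve conflicts between overrides.
ACTION_RANK = {"monitor": 0, "warn": 1, "block": 2}


@dataclass
class ResponsePolicy:
    """Hypothetical customer policy: a default action per detection category,
    plus per-group overrides. Categories not configured fall back to "monitor"."""
    defaults: dict[str, str]
    overrides: dict[tuple[str, str], str] = field(default_factory=dict)

    def action_for(self, category: str, user_groups: set[str]) -> str:
        matched = [self.overrides[(category, g)] for g in user_groups
                   if (category, g) in self.overrides]
        if matched:
            # If several group overrides apply, the most restrictive one wins.
            return max(matched, key=ACTION_RANK.__getitem__)
        return self.defaults.get(category, "monitor")


policy = ResponsePolicy(
    defaults={"aitm_phishing": "block"},
    overrides={("aitm_phishing", "incident-response"): "warn"},
)
print(policy.action_for("aitm_phishing", {"engineering"}))         # block
print(policy.action_for("aitm_phishing", {"incident-response"}))   # warn
print(policy.action_for("device_code_phishing", {"engineering"}))  # monitor
```

Because detections and response policy are separate, a new detection for an existing category inherits whatever action the customer has already configured for that category.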

All of this adds up to advanced browser threat protection for your organization, without requiring the specialized in-house expertise we've spent years building.

If you're a Push customer, you already know that we regularly collaborate with security teams to identify and refine detection use cases and assist with investigations. In the past few months alone, we've worked closely with teams targeted by device code phishing, InstallFix, and ClickFix campaigns, among others.

If you’re not a customer and are curious about how Push’s agentic threat hunting and detection engineering capabilities can address your use cases, please get in touch.

Learn more

Push Security is the most powerful AI-native security tool in the browser. Think EDR, but for the browser — high-fidelity telemetry and real-time control across every session, on every device, with no browser migration required.

Security teams use Push to detect and stop advanced browser-based attacks like AiTM phishing, ClickFix, and session hijacking; gain visibility and control over AI tool usage across their workforce; harden identities by surfacing credential reuse, SSO gaps, and shadow IT; and support data loss and insider investigations with browser-layer telemetry that other tools can't see.

Book a live demo to learn more.