Why typical browser extension risk scores are poor predictors of which extensions could actually lead to a compromise.

TL;DR: Traditional extension risk scores — based on data like permissions, store metadata, code analysis, and developer reputation — are poor predictors of which extensions will actually lead to a compromise. The extensions behind every major breach of the past 18 months scored as normal or low-risk beforehand.

If your strategy is "remove the highest-risk extensions," you're optimizing for the wrong question. The more effective approach is to implement an allowlist, block the rest, and monitor the approved set for the changes — like ownership transfers and permission escalations — that actually precede real-world attacks.

Browser extensions have become one of the most talked-about attack surfaces in security over the past 18 months, and understandably so: a string of high-profile supply chain compromises has collectively impacted tens of millions of users since late 2024 (Cyberhaven, DarkSpectre, and Trust Wallet, among many others).

But as the industry scrambles to respond, there's a tendency to treat browser extension management as an entirely new paradigm that requires a new approach, particularly risk scoring systems that attempt to rate each extension on a spectrum from safe to dangerous.

We think this framing misses the point, and that it's leading security teams toward a strategy that won't protect them from the attacks that actually cause damage.

The practical problem: "just remove the high-risk ones" doesn't work

The strategy we see most often is some version of "identify and remove the highest-risk extensions." On the surface this seems reasonable — you can't address everything, so you prioritize. The problem is that it doesn't materially reduce your exposure to the attacks that are actually happening.

Browser extension attacks almost always follow one of two patterns:

A legitimate developer is compromised through consent phishing, session theft, or AiTM phishing, and a malicious update is pushed to the existing user base. Cyberhaven is a good example: a developer was consent phished into authorizing an app that granted the attacker access to the extension store.

An attacker builds or acquires a clean extension, operates it legitimately until it accumulates a sufficient user base, then deploys a malicious update. GitLab's threat intelligence team documented a cluster of extensions where access had been acquired from the original developers rather than via compromise.

This means that real-world extension breaches aren't coming from extensions that looked risky beforehand. If your strategy is "identify and remove the highest-risk extensions," you're optimizing for the wrong thing — because even extensions that score as moderate or low risk by every conventional measure still have the permissions and access needed for a full compromise. If you skim off the top 10% “riskiest” extensions, 90% of the extensions in your environment could still become a breach vector.

What risk scoring is designed to measure — and why it can’t predict future compromise

Most extension risk scoring systems evaluate some combination of permissions, install count, user ratings, code analysis, developer reputation, and web store trust signals. These are nice-to-have data points, but they share a common limitation: they describe the extension as it is today, not what it will become after the next update. That makes them poor predictors of the thing that actually causes breaches, which is a previously-clean extension being weaponized through a supply chain compromise.

It's worth examining why each signal falls short as a predictor specifically of future compromise, because the failure modes are different and well-documented.

Permissions

Permissions are the most meaningful input to a risk score, because they determine what an extension is capable of doing if it turns malicious. An extension with access to cookies, scripting, and broad host permissions can steal session tokens, log keystrokes, and exfiltrate data from any site the user visits. This is the data that actually answers the question "what could this extension do to us if it went bad?"

The problem is that these permissions are extraordinarily common. We analyzed a sample of 20,000 unique extensions deployed across Push customers and found that 46.76% have the permission combinations needed to perform account takeover with no user interaction.

This figure also understates the real exposure. One of the most straightforward attack techniques involves injecting content scripts into web pages to hook request functions and extract cookies. The user-facing warning Chrome shows for this capability, "Read and change all your data on the websites you visit", is the same generic string shown for ad blockers, password managers, and translation tools.

You can't practically remove everything that could be dangerous, because that includes most of the extensions people actually use for work. And if you set the threshold lower to keep the list manageable, you're excluding extensions that have the same permissions and pose the same theoretical risk.
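
To make the permission question concrete, the sketch below checks a parsed manifest.json for the kinds of combinations described above. The keys `permissions`, `host_permissions`, and `content_scripts[].matches` are standard manifest fields; the function names, the set of "broad" match patterns, and the rule itself are illustrative assumptions, not the criteria behind the 46.76% figure.

```python
# Illustrative heuristic only: the pattern list and rule are assumptions.
BROAD_HOST_PATTERNS = {"<all_urls>", "*://*/*", "http://*/*", "https://*/*"}


def has_broad_hosts(patterns) -> bool:
    """True if any match pattern covers effectively every site."""
    return bool(set(patterns) & BROAD_HOST_PATTERNS)


def takeover_capable(manifest: dict) -> bool:
    """Return True if the declared access would allow session theft
    should a future update of the extension turn malicious."""
    perms = set(manifest.get("permissions", []))

    # Manifest V3 lists hosts under host_permissions; Manifest V2 mixes
    # host patterns into permissions, so both places are checked.
    hosts = set(manifest.get("host_permissions", [])) | perms
    broad_api_access = has_broad_hosts(hosts) and bool(
        perms & {"cookies", "scripting"}
    )

    # A content script that runs on every page can hook request functions
    # without declaring the cookies or scripting permissions at all.
    broad_content_script = any(
        has_broad_hosts(script.get("matches", []))
        for script in manifest.get("content_scripts", [])
    )
    return broad_api_access or broad_content_script
```

Run against a typical ad blocker or translation tool manifest, a check like this returns True, which is the point: the capability is too common to serve as a removal criterion on its own.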

Install counts, ratings, developer reputation, and web store badges

These signals share a common failure mode, so it's worth addressing them together: they all describe the extension's reputation at a point in time, and attackers have both the means and the incentive to ensure that reputation looks clean.

Install count is sometimes used as a proxy for trustworthiness, on the assumption that widely-adopted extensions are more likely to be legitimate. In practice, high install count is often a precondition for the attack rather than a signal against it.

Attackers who acquire or build extensions are specifically waiting for the install base to grow before weaponizing — what researchers are calling the "sleeper agent" strategy. Install counts can also be easily inflated with bots, meaning that using them as a positive risk signal actively rewards the attackers who are best at gaming the system.

The DarkSpectre campaign accumulated over 8.8 million compromised browsers across extensions that held "verified" status and healthy install counts throughout a seven-year operational period.

The AITOPIA impersonation extensions had over 900,000 combined installs and a Google "Featured" badge.

Cyberhaven had approximately 400,000 users at the time of compromise.

User ratings suffer from the same problems. Attackers use bot networks to generate positive reviews, and even genuinely clean extensions will carry good ratings right up until they're compromised. By the time users start leaving negative reviews the attack has already run its course.

Developer reputation and "Featured" and "Verified" badges fail for a related but slightly different reason: the attack typically doesn't come from a known-bad developer. It comes from a reputable developer whose account has been compromised, or from an extension that has changed hands.

Extensions compromised in the broader campaign impacting Cyberhaven had been legitimate, well-maintained tools with strong developer reputations before the developer accounts were phished. Likewise, the QuickLens and ShotBird ownership transfer attacks in March 2026 involved extensions acquired through a legitimate marketplace, with malicious code introduced after the sale. Developer reputation at time of installation told you nothing about the developer at time of attack.

The net result across all of these signals is that the extensions most likely to appear in breach headlines — established tools with large user bases, good ratings, verified badges, and reputable developers — are precisely the ones that risk scoring would rate as low-risk.

Code analysis

Static analysis of extension code is the approach that sounds most rigorous, and it's the basis for Chrome Web Store's own review process. Google operates a hybrid system combining automated analysis and manual review, with manual review typically reserved for submissions that trigger specific signals such as sensitive permissions or large code volumes.

But attackers have developed reliable techniques to pass these checks, and the specific evasion methods used in major campaigns illustrate why static analysis consistently falls short.

The Cyberhaven compromise used dynamically loaded content fetched from a remote server via service workers, with the C2 infrastructure delivering different malicious configurations to different end-users — meaning that even if a scanner fetched the remote payload, it might receive a benign configuration depending on the target profile.

The GhostPoster campaign (part of the broader DarkSpectre operation) took evasion further still: the extension waited 48 hours between configuration check-ins and only loaded a malicious payload 10% of the time. No sandbox is running for 48 hours, and a 10% activation rate means that nine out of ten analysis runs would see nothing at all.
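
The arithmetic behind that activation rate can be sketched as follows. This is a simplified model that assumes every analysis run reaches a configuration check-in and that each check-in is an independent trial; the function name is hypothetical.

```python
def chance_of_seeing_payload(activation_rate: float, runs: int) -> float:
    """Probability that at least one of `runs` independent analysis runs
    observes the malicious payload, given a per-run activation rate."""
    if not 0.0 <= activation_rate <= 1.0:
        raise ValueError("activation_rate must be between 0 and 1")
    if runs < 0:
        raise ValueError("runs must be non-negative")
    # Complement of "every single run saw nothing".
    return 1.0 - (1.0 - activation_rate) ** runs


if __name__ == "__main__":
    for runs in (1, 5, 10, 20):
        chance = chance_of_seeing_payload(0.10, runs)
        print(f"{runs:>2} check-ins observed: {chance:.1%} chance of seeing the payload")
```

Under these assumptions, even ten observed check-ins give only about a 65% chance of ever seeing the payload, and with 48 hours between check-ins that many observations would take on the order of weeks of continuous sandbox time.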

These are not outlier techniques. Across the major campaigns documented since late 2024 — including the 108-extension campaign discovered in April 2026 — some combination of dynamically loaded payloads, conditional execution, time-delayed activation, and base64-encoded endpoints has been present in virtually every case. If these techniques are bypassing Google's own review infrastructure, which has both the scale and the incentive to detect them, they will bypass third-party code analysis tools as well.

It's also worth noting that Chrome Web Store policy explicitly disallows code obfuscation, precisely because it makes review impossible. The fact that attackers have found ways to hide malicious behavior without technically obfuscating their code speaks to the fundamental asymmetry at play: the attacker controls when and how malicious functionality appears, and static analysis can only evaluate what's present at the time of review.

But extension scores combine all of these things …

The obvious counterargument is that no serious risk scoring system relies on any single signal in isolation — the value is supposed to come from combining permissions, install count, ratings, code analysis, and developer reputation into a composite score that's more predictive than any individual input. In theory, this sounds like the right approach: weak signals aggregated together should produce a stronger signal.

In practice, combining signals that are individually unable to predict supply chain compromise doesn't produce a signal that can. Aggregating a set of backward-looking indicators doesn't make the aggregate forward-looking; it just gives you a more detailed description of the present state, which is the state before the attack has happened. No weighting or combination of install count, code behavior, and developer reputation would have flagged Cyberhaven, or DarkSpectre, or Trust Wallet before the malicious update shipped, because at that point every input to the composite score was returning a legitimate value.

Meanwhile, the indicators that do predict real-world compromise — an extension changing ownership, a developer account being phished, an update introducing behavior that wasn't present in prior versions, or an extension being explicitly confirmed as malicious through threat intelligence — aren't predictive risk score inputs. They're discrete events that require monitoring and an immediate response, not a recalculated number on a dashboard. This is an important distinction: the signals that matter are changes over time, not static attributes at a point in time, and they call for a detection-and-response workflow rather than a periodic risk review.

What works instead

If the goal is to reduce your exposure to extension-based supply chain compromise rather than to generate a ranked list of risk, the approach is operationally straightforward — even if it requires more discipline than deploying a scoring dashboard.

Reduce your attack surface through allowlisting

Build a complete inventory of every extension running across your environment — what's installed, how it got there (managed deployment, manual install, sideloaded, developer mode), what permissions it has, who's using it, and whether it serves a legitimate work purpose. Then create a strict allowlist of vetted and approved extensions and block everything else.

This is the same default-deny approach that's been best practice for firewall policy and endpoint allowlisting for decades. In Push, it works like building a firewall rule: a global block rule at the bottom that disables all browser extensions, with explicit exceptions above it for approved tools. Users who attempt to install unapproved extensions see a block screen.
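
The firewall analogy can be sketched as a first-match rule list. This is a minimal illustration of the default-deny logic, not Push's implementation or API; the `Rule` type, the `evaluate` function, and the extension ID are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    action: str                          # "allow" or "block"
    extension_id: Optional[str] = None   # None matches every extension


def evaluate(rules: List[Rule], extension_id: str) -> str:
    """Return the action of the first rule that matches, top to bottom."""
    for rule in rules:
        if rule.extension_id is None or rule.extension_id == extension_id:
            return rule.action
    # Fail closed if the rule list has no catch-all at the bottom.
    return "block"


# Placeholder ID for an approved tool (not a real extension).
APPROVED_ID = "a" * 32

POLICY = [
    Rule("allow", APPROVED_ID),  # explicit exception for an approved tool
    Rule("block"),               # global block rule at the bottom
]
```

With this policy, `evaluate(POLICY, APPROVED_ID)` returns "allow" and any other ID falls through to the global block rule.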

The key insight is that every extension you don't really need, but haven't blocked, is attack surface that exists for no business reason. Most organizations are surprised by how many of the extensions in their environment are unused, forgotten, or have readily available alternatives. Reducing the population of installed extensions to only the ones that serve a genuine work purpose is the single most effective thing you can do — and it doesn't require a risk score to accomplish.

Monitor for changes that indicate weaponization

Once you have a controlled baseline, the risk shifts from unmanaged installations (those are blocked) to changes in the extensions you've already approved. These are the signals that map to real-world attack patterns and serve as leading indicators of weaponization:

Ownership changes — an extension changing hands is one of the most reliable precursors to supply chain compromise, as demonstrated by the QuickLens and ShotBird attacks and the acquired-extension clusters documented by GitLab.

Developer contact information changes — often an early indicator that an extension has been sold or that a developer account has been taken over.

Permission escalations in updates — a previously-scoped extension suddenly requesting broad host permissions or cookie access.

Delisting from the web store — can indicate that the store's review process has caught something, or that the developer has abandoned the extension.

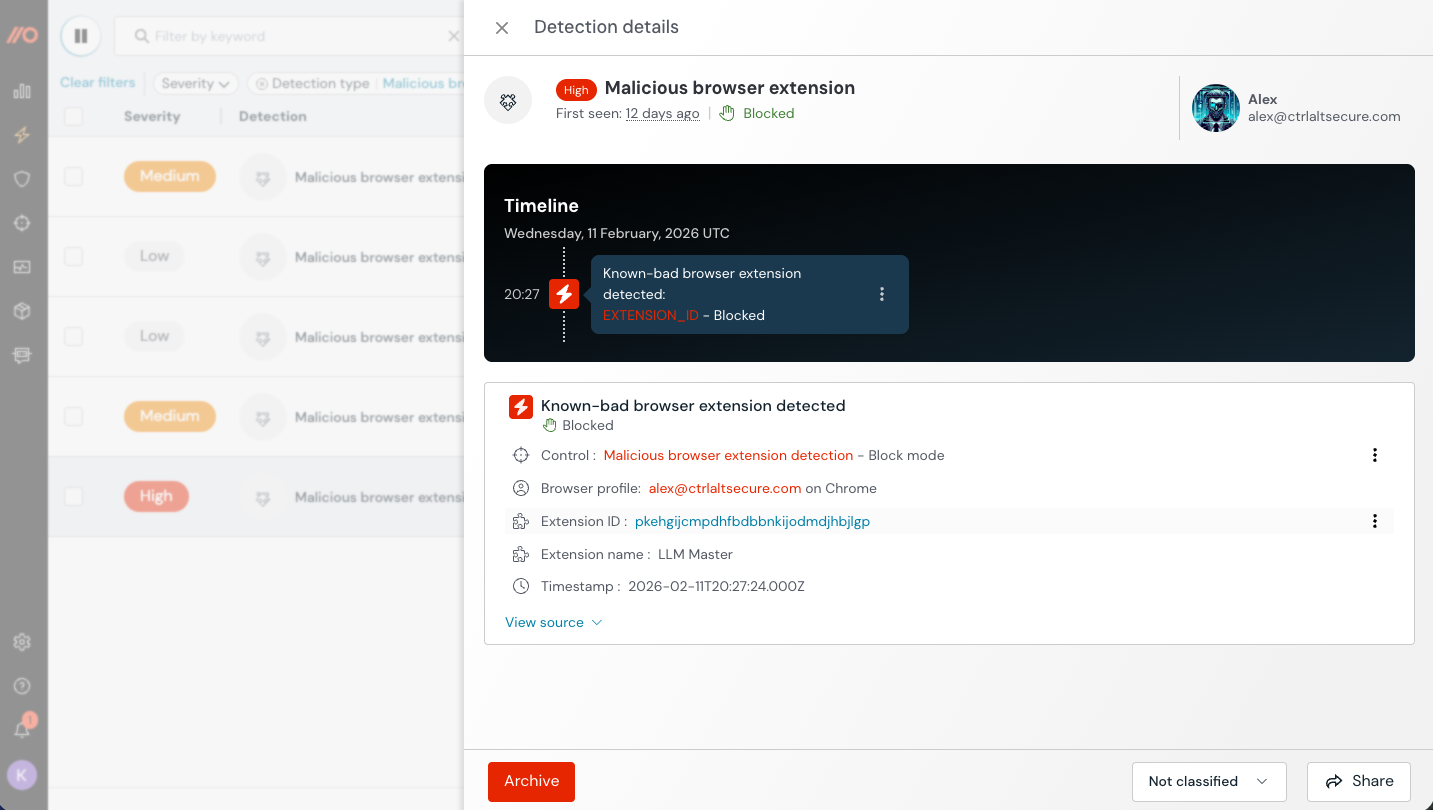

Known malicious classification — when an extension is confirmed as weaponized or linked to an active campaign through threat intelligence. (Push blocks known-bad extensions automatically).
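
The signals listed above are all differences between two points in time, which can be sketched as a comparison of metadata snapshots. The `Snapshot` fields and signal names below are hypothetical and chosen for illustration; they are not a real store or Push schema.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical point-in-time metadata for one approved extension."""
    version: str
    owner: str
    contact_email: str
    permissions: FrozenSet[str]
    listed_in_store: bool
    known_malicious: bool = False


def detect_changes(before: Snapshot, after: Snapshot) -> List[str]:
    """Return the change signals observed between two snapshots."""
    signals = []
    if after.version != before.version:
        signals.append("new_version")
    if after.owner != before.owner:
        signals.append("ownership_changed")
    if after.contact_email != before.contact_email:
        signals.append("contact_changed")
    # Only newly added permissions count as an escalation.
    if after.permissions - before.permissions:
        signals.append("permissions_added")
    if before.listed_in_store and not after.listed_in_store:
        signals.append("delisted")
    if after.known_malicious and not before.known_malicious:
        signals.append("known_malicious")
    return signals
```

None of these checks looks at a static attribute in isolation; each one fires only when something differs from the approved baseline.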

To make this concrete: Push emits structured events via webhook whenever extension metadata changes, capturing all of the variables above. These can be fed directly into your SIEM or SOAR workflows, making it easy for security teams to detect when a meaningful change occurs. An ownership change on its own warrants investigation; an ownership change paired with a new version and added permissions warrants an immediate block pending review.
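
The triage rule in the previous paragraph could be expressed in a SOAR playbook roughly like this. The signal names and return values are hypothetical labels, not Push's webhook schema, and the handling of any signal other than the cases stated above is an assumption.

```python
from typing import Set


def triage(signals: Set[str]) -> str:
    """Map a set of observed change signals to a response action."""
    # Confirmed-malicious extensions are blocked outright.
    if "known_malicious" in signals:
        return "block"

    if "ownership_changed" in signals:
        # Ownership change plus a new version plus added permissions:
        # block immediately, then review.
        if {"new_version", "permissions_added"} <= signals:
            return "block_pending_review"
        # An ownership change on its own warrants investigation.
        return "investigate"

    # Assumption: any other change signal is queued for investigation.
    if signals:
        return "investigate"
    return "no_action"
```

For example, `triage({"ownership_changed"})` returns "investigate", while adding "new_version" and "permissions_added" to the same set escalates the result to "block_pending_review".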

Push detects these changes in real time and can automatically block an extension when a meaningful risk indicator fires, before the damage propagates. This is fundamentally different from a periodic risk score: rather than attempting to predict which extensions might go bad based on static attributes, Push monitors for the specific events that precede or accompany weaponization in the attacks we've actually observed.

The bottom line

Traditional extension risk scores — based on permissions, store metadata, code analysis, and developer reputation — are poor predictors of which extensions will actually compromise you. The extensions involved in the major breaches of the past 18 months consistently scored as normal or low-risk right up until the moment they were weaponized. If your extension management strategy is built around "identify the riskiest extensions and remove them," the extension that gets you is the one that wasn't on the list.

Browser extensions are software. They're third-party code running with significant privilege inside the browser, capable of reading and modifying page content, accessing cookies and session tokens, and interacting with virtually every web application your employees use. Like any other software dependency — OAuth integrations being another relevant recent example in public breaches — each one expands your attack surface.

The principles behind managing browser extensions need to be the same as any other software — default-deny, build an allowlist, monitor and maintain that allowlist. This might trigger some PTSD for security teams, but it shouldn’t. On the endpoint, application allowlisting has always been operationally painful — diverse workflows, unpredictable application needs, and the overhead of vetting every binary made it impractical for most organizations outside of high-security environments. In the browser, it’s not that serious. You’re not going to brick an endpoint by blocking a third-party browser extension.

The browser is one of the few environments where an allowlisting approach is both technically feasible and operationally lightweight: use it to your advantage.

Push detects and blocks malicious browser extensions, and gives security teams the controls to enforce an extension allowlist and monitor for risky changes across every browser in the environment. Combined with protection against AiTM phishing, ClickFix attacks, session hijacking, and stolen credentials — plus proactive hardening for ghost logins, SSO coverage gaps, MFA gaps, and vulnerable passwords — Push provides browser-native visibility and control where it matters most.

To learn more about Push, check out our latest product overview, view our demo library, or book some time with one of our team for a live demo.